I’m late to the game on this, but I think it’s worth writing a bit about the new Managed Disks service in Azure.

For years, Amazon has had Elastic Block Store (EBS)[footnote]I should point out that I know virtually nothing about AWS – I could be way off-base[/footnote]. EBS is a storage system dedicated to providing block storage volumes to VMs running in EC2, which made it simple to create an Amazon VM: pick an AMI (image), pick the storage type, and you’re practically finished. Azure, meanwhile, put everyone through the trial of setting up an Azure Storage Account. You then had to monitor deployments to make sure that deleting a VM also deleted its disks (where appropriate), and to make sure performance wasn’t being throttled by the Storage Account limits. Each Account has a set IOPS limit (20k IOPS for Standard Storage), and each blob (read: disk) has its own IOPS limit. If you deployed from the portal (which is where your average Azure user lives), you got a new storage account with a randomly generated name. That made it really difficult to know where your VM disks were, unless you pre-deployed the Storage Account. Once the prospective Azure user had their head wrapped around that, they then had to choose between standard and premium storage. Premium storage is faster, but comes with a ~5x price tag! So, simple: just change it if needed, right? Unfortunately, this is no simple task.
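To put the Storage Account limit in numbers, here is a minimal Python sketch. It assumes each Standard disk is capped at 500 IOPS. That per-disk figure is an assumption here, not something stated above, so check the current Azure limits documentation. Under that assumption, the 20k IOPS account limit is saturated by about 40 busy disks.

```python
# Sketch: how many disks can run flat out in one Storage Account before the
# account-level IOPS cap starts throttling them.
# ASSUMPTION: 500 IOPS per Standard disk. The 20,000 IOPS account limit for
# Standard Storage comes from the text above.

def max_busy_disks(account_iops_limit: int = 20_000, disk_iops_limit: int = 500) -> int:
    """Return how many disks can each hit their own IOPS cap without
    exceeding the account-level IOPS cap."""
    if disk_iops_limit <= 0:
        raise ValueError("disk_iops_limit must be positive")
    return account_iops_limit // disk_iops_limit


if __name__ == "__main__":
    print(max_busy_disks())  # 20000 // 500 = 40
```

With Managed Disks, this bookkeeping goes away, because there is no Storage Account for you to balance disks across.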

All this was really confusing to the new Azure user. Fortunately, we now have Managed Disks. For the most part, they behave exactly like the old VM disks. However, each one is a resource in Azure Resource Manager, which makes them both more flexible and easier to work with. For example, you can now use copyIndex() to create multiple VM disks and attach them all to the VM without having to specify each disk by hand.
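In a template, that means one copy loop over the VM's dataDisks property instead of one hand-written entry per disk. The Python sketch below builds the array such a loop would expand to, so you can see the result. The helper name, the `<vm>-datadisk<n>` naming pattern and the 1023 GB default are illustrative assumptions, not Azure requirements.

```python
import json

# Sketch: build the "dataDisks" array that an ARM copy loop using copyIndex()
# would expand to. Names and sizes are hypothetical examples.

def data_disks(vm_name: str, count: int, size_gb: int = 1023) -> list[dict]:
    """Return one implicitly created managed data disk per LUN (0..count-1)."""
    return [
        {
            "lun": i,                               # copyIndex() starts at 0
            "name": f"{vm_name}-datadisk{i + 1}",   # hypothetical naming pattern
            "createOption": "Empty",                # new, empty managed disk
            "diskSizeGB": size_gb,
        }
        for i in range(count)
    ]


if __name__ == "__main__":
    print(json.dumps({"dataDisks": data_disks("web01", 3)}, indent=2))
```

Changing the disk count becomes a one-value edit, which is the convenience copyIndex() brings to templates.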

For Azure users who already have VMs in Azure, there are two questions I hear: “Can I convert?” and “Why should I convert?”. The latter is easier to answer: the improved disk management reduces administrative overhead. Any new VMs should definitely be created with Managed Disks, and running two management models side by side is very inconvenient. Whether to convert existing VMs is a bit more nuanced. One scenario sticks out in my mind. While it’s possible to recover from on-premises to Azure Managed Disks, there is currently no way to use the recently released native Azure Site Recovery capabilities with Managed Disks. I’m told it’s a very high-priority item for the ASR team, and I hope to see it soon, as it will make it dramatically simpler to enable multi-region availability for IaaS deployments.

Another key benefit of Azure Managed Disks is improved availability. There’s no improved SLA per se at this time. However, when you deploy VMs with Managed Disks into an Availability Set, the Availability Set is aligned with the storage fabric, so both the VM hosts and the storage hosts are distributed. With the old model, there was no guarantee that the storage fabric was aligned with your VM fabric from an update/fault domain standpoint. Hopefully there will be an updated SLA once Microsoft has collected data on what is actually seen in the real world.

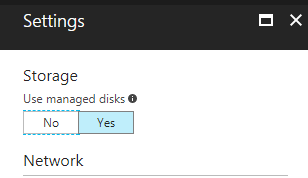

When creating a VM in the portal, simply select “Use Managed Disks”.

For templates, there are only a few changes to make. You’ll need to update any availabilitySet to use a supported apiVersion (I’m partial to 2016-04-30-preview, having had some challenges with the newer one) and add some properties to the AvSet. One restriction to note is that once you set “managed” to “true”, you’re limited to 2 fault domains and update domains! The preferred notation is now a sku property (with the name “Aligned”), which allows for a bit more flexibility.

```json
{
  "apiVersion": "2016-04-30-preview",
  "type": "Microsoft.Compute/availabilitySets",
  "name": "[variables('availabilitySetName')]",
  "location": "[resourceGroup().location]",
  "properties": {
    "platformFaultDomainCount": 2,
    "platformUpdateDomainCount": 2
  },
  "sku": {
    "name": "Aligned"
  }
}
```

There are two options for creating Managed Disks inside a template: implicit creation or explicit creation. Implicit creation fits current templates more neatly, and explicit creation gives the template author more flexibility in the future.

Here is an example of implicit creation (within the context of a VM resource!). Basically, you create an osDisk or dataDisk without specifying a storage account or VHD URL.

```json
{
  "apiVersion": "2016-04-30-preview",
  "type": "Microsoft.Compute/virtualMachines",
  "name": "[concat(parameters('vmNamePrefix'), copyIndex())]",
  "copy": {
    "name": "virtualMachineLoop",
    "count": "[variables('numberOfInstances')]"
  },
  "properties": {
    "storageProfile": {
      "imageReference": {
        "publisher": "[parameters('imagePublisher')]",
        "offer": "[parameters('imageOffer')]",
        "sku": "[parameters('imageSKU')]",
        "version": "latest"
      },
      "osDisk": {
        "createOption": "FromImage"
      }
    },
    [...]
  }
}
```
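Even with implicit creation, you still choose Standard or Premium storage per disk, through the managedDisk.storageAccountType property of the osDisk (or of each dataDisk). The Python sketch below builds such an osDisk fragment. The helper and its premium flag are hypothetical. Standard_LRS and Premium_LRS are the storage type values used by managed disks.

```python
import json

# Sketch: an implicitly created managed OS disk with an explicit storage type.
# The helper and its "premium" flag are hypothetical.

def os_disk(premium: bool = False) -> dict:
    """Return an osDisk fragment for a VM storageProfile."""
    return {
        "createOption": "FromImage",  # build the disk from the imageReference
        "managedDisk": {
            # No storage account or VHD URI: Azure creates the managed disk itself.
            "storageAccountType": "Premium_LRS" if premium else "Standard_LRS",
        },
    }


if __name__ == "__main__":
    print(json.dumps({"osDisk": os_disk(premium=True)}, indent=2))
```

Keep in mind that Premium_LRS also requires a VM size that supports Premium Storage.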

The other option declares the disks explicitly as independent resources, then attaches them to the VM by reference.

```json
{
  "type": "Microsoft.Compute/disks",
  "name": "[concat(variables('vmName'),'-datadisk1')]",
  "apiVersion": "2016-04-30-preview",
  "location": "[resourceGroup().location]",
  "properties": {
    "creationData": {
      "createOption": "Empty"
    },
    "accountType": "Standard_LRS",
    "diskSizeGB": 1023
  }
}
```

Then, the VM dataDisks object will look like this:

```json
"dataDisks": [
  {
    "lun": 0,
    "name": "[concat(variables('vmName'),'-datadisk1')]",
    "createOption": "Attach",
    "managedDisk": {
      "id": "[resourceId('Microsoft.Compute/disks', concat(variables('vmName'),'-datadisk1'))]"
    }
  }
]
```
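Because the disk is a separate resource, the VM should also list it in dependsOn so Resource Manager creates the disk before attaching it. The Python sketch below derives the disk resource, the matching dataDisks entry and the dependsOn value from a single name, so the three can't drift apart. The helper is hypothetical, and the property names follow the 2016-04-30-preview examples in this post.

```python
import json

# Sketch: generate an explicit managed disk resource plus the VM-side pieces
# that reference it. The helper name and naming pattern are hypothetical.

def explicit_data_disk(vm_name: str, index: int, lun: int,
                       size_gb: int = 1023,
                       account_type: str = "Standard_LRS") -> tuple[dict, dict, str]:
    """Return (disk_resource, data_disk_entry, depends_on_value)."""
    disk_name = f"{vm_name}-datadisk{index}"
    disk_id = f"[resourceId('Microsoft.Compute/disks', '{disk_name}')]"
    disk_resource = {
        "type": "Microsoft.Compute/disks",
        "name": disk_name,
        "apiVersion": "2016-04-30-preview",
        "location": "[resourceGroup().location]",
        "properties": {
            "creationData": {"createOption": "Empty"},
            "accountType": account_type,
            "diskSizeGB": size_gb,
        },
    }
    data_disk_entry = {
        "lun": lun,
        "name": disk_name,
        "createOption": "Attach",         # attach the existing managed disk
        "managedDisk": {"id": disk_id},   # reference it by resource ID
    }
    return disk_resource, data_disk_entry, disk_id


if __name__ == "__main__":
    disk, entry, dep = explicit_data_disk("web01", 1, 0)
    print(json.dumps({"resource": disk, "dataDisk": entry, "dependsOn": [dep]}, indent=2))
```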

There’s a list of templates that support Managed Disks in the excellent GitHub repository of Azure quickstart templates: https://github.com/Azure/azure-quickstart-templates/blob/master/managed-disk-support-list.md.

VM disk encryption behaves exactly the same way for managed disks as it does for unmanaged disks. It’s not simple, but it’s effective. Server Side Encryption is also now available, which makes it far easier to check the “encrypted at rest” box.

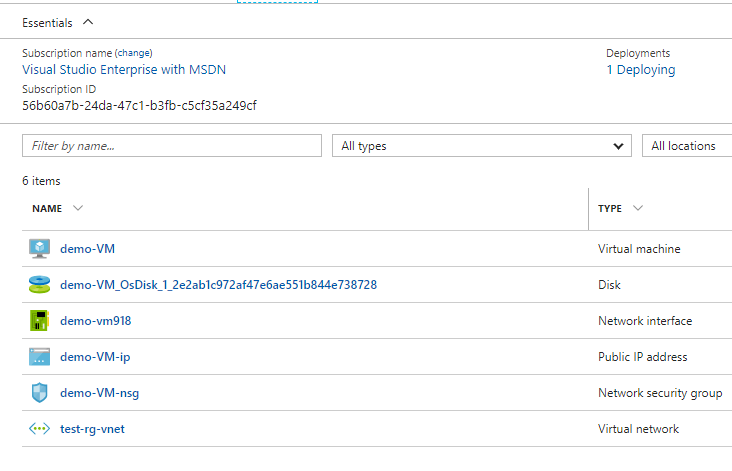

Unfortunately, deploying from the Portal still results in disk names with a lot of unnecessary characters. This is easily managed by deploying through PowerShell or, preferably, ARM templates, so that you can follow a naming convention.

The Portal still gives the disk a long name, but at least the disk is now clearly visible.
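A naming convention is easiest to enforce when every name is generated in one place. The Python sketch below assumes a hypothetical convention of lower-case `<vm>-osdisk` and `<vm>-datadisk<n>`. In a template, you would build the same pattern with concat() and pass the results as the osDisk and dataDisks name values.

```python
import re

# Hypothetical convention: lower-case "<vm>-osdisk" and "<vm>-datadisk<n>".
_VALID_VM_NAME = re.compile(r"^[a-z0-9][a-z0-9-]*$")

def disk_name(vm_name: str, role: str, index: int = 0) -> str:
    """Return a disk name that follows the convention above."""
    vm = vm_name.lower()
    if not _VALID_VM_NAME.match(vm):
        raise ValueError(f"unexpected characters in VM name: {vm_name!r}")
    if role == "os":
        return f"{vm}-osdisk"
    if role == "data":
        return f"{vm}-datadisk{index}"
    raise ValueError(f"unknown disk role: {role!r}")


if __name__ == "__main__":
    print(disk_name("WEB01", "os"))       # web01-osdisk
    print(disk_name("web01", "data", 2))  # web01-datadisk2
```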

One last thing to consider before I wrap up this post: Managed Disks are billed like Premium Storage. That is, they have SKUs, and you are billed for what is provisioned rather than for what you use. This is a departure from the previous Storage Account construct. However, disks are now easy to resize, and they go up to 4TB. So if cost is very important to you, start with a 32GB disk and move up as required. The disk SKUs and pricing are listed on the Azure Managed Disks pricing page.
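Because you pay for the provisioned size, the size tier a disk falls into is what matters for cost, not how full the disk is. The Python sketch below rounds a requested size up to the next tier. The tier list (32 GB up to 4095 GB) is an assumption based on the disk sizes offered when this was written. Check the pricing page before relying on it.

```python
import bisect

# ASSUMPTION: managed disk size tiers in GB as offered at the time of writing.
TIERS_GB = [32, 64, 128, 512, 1024, 2048, 4095]

def billed_tier_gb(requested_gb: int) -> int:
    """Return the smallest tier that can hold requested_gb (what you pay for)."""
    if requested_gb <= 0:
        raise ValueError("requested_gb must be positive")
    i = bisect.bisect_left(TIERS_GB, requested_gb)  # first tier >= requested size
    if i == len(TIERS_GB):
        raise ValueError(f"{requested_gb} GB exceeds the largest tier")
    return TIERS_GB[i]


if __name__ == "__main__":
    print(billed_tier_gb(40))  # 64
```

For example, a 40 GB requirement is billed as a 64 GB disk. This is why starting small and resizing upward can save money.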